What Is High Dynamic Range (HDR)?

What is HDR?

You will have heard the term HDR talked about more and more in the TV market over the last year or so, with the major TV manufacturers launching new screens supporting this feature during 2016. It's become the 'next big thing' in the TV space, with movies and console games being developed to support this new technology. Now HDR has started to be more widely adopted in the desktop monitor space as well and we are starting to see more and more talk of HDR support, including at the recent CES 2017 event in Las Vegas. This will address the PC gaming monitor market directly, as well as those who are after an all-in-one multimedia display for console gaming, movies and PC use as opposed to venturing into the TV market. We thought it would be useful to take a step back and look at what exactly HDR is, what it offers you, how it is implemented and what you need to be aware of when selecting a display for HDR content. We will try to focus here more on the desktop monitor market than get too deep into the TV market, since that is our primary focus here at TFT Central.

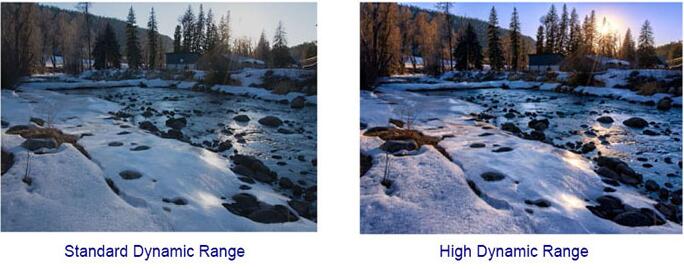

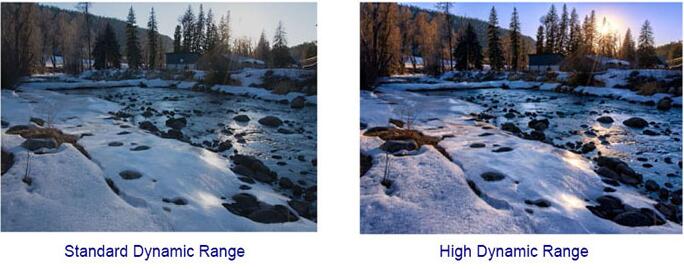

Trying to put this in simple terms, 'High Dynamic Range' refers to the ability to display a more significant difference between bright parts of an image and dark parts of an image. This is of significant benefit in games and movies where it helps create more realistic images and helps preserve detail in scenes where otherwise the contrast ratio of the display may be a limiting factor. On a screen with a low contrast ratio or one that operates with a "standard dynamic range" (SDR), you may see detail in darker scenes lost, where subtle dark grey tones become black. Likewise in bright scenes you may lose detail as bright parts are clipped to white, and this only becomes more problematic when the display is trying to produce a scene with a wide range of brightness levels at once. NVIDIA summarizes the motivation for HDR nicely in three points: "bright things can be really bright, dark things can be really dark, and details can be seen in both". This helps produce a more 'dynamic' image, hence the name. The resulting images are significantly different, richer and more 'real' than those from standard range displays and content.

Note that pictures provided in this article showing Standard vs High Dynamic Range are for indicative purposes only, as it's not possible to truly capture the effects and then view them on a standard range display. They are intended as a rough guide to give you an idea of the differences.

High Dynamic Range Rendering

A typical desktop monitor based on a TN Film or IPS technology panel can offer a real-life static contrast ratio of around 800 - 1200:1, while a VA technology panel can range between 2000 and 5000:1 commonly. The human eye can perceive scenes with a very high dynamic contrast ratio, around 1 million:1 (1,000,000:1). Adaptation to altering light is achieved in part through adjustments of the iris and slow chemical changes, which take some time. For instance, think about the delay in being able to see when switching from bright lighting to darkness. At any given time, the eye's static range is smaller, at around 10,000:1. However, this is still higher than the static range of most display technologies including VA panels and so this is where features like HDR are needed to extend that dynamic range.
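One common way to compare figures like these is to convert each contrast ratio into photographic "stops" (doublings of luminance), which is simply the base-2 logarithm of the ratio. The short sketch below is illustrative only: the function name and the single representative value chosen for each range (for example 1000:1 for TN Film / IPS and 3000:1 for VA) are our own assumptions rather than measurements.

```python
import math

def contrast_to_stops(ratio):
    """Convert a contrast ratio (e.g. 1000 for 1000:1) into stops (doublings of luminance)."""
    return math.log2(ratio)

# Representative values picked from the ranges quoted in the text (assumptions).
examples = {
    "TN Film / IPS panel (~1000:1)": 1_000,
    "VA panel (~3000:1)": 3_000,
    "Human eye, static (~10,000:1)": 10_000,
    "Human eye, with adaptation (~1,000,000:1)": 1_000_000,
}

for label, ratio in examples.items():
    print(f"{label}: {contrast_to_stops(ratio):.1f} stops")
```

On this scale a typical IPS panel sits at roughly 10 stops against about 13 for the eye's static range, which is the gap HDR displays are trying to close.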

Achieving High Dynamic Range and Improving Contrast Ratio

You will probably be familiar with the term "dynamic contrast ratio" (DCR), a technology which has been around now for many years and is widely used in the monitor and TV market, although something which has fallen out of favour in more recent times. Dynamic contrast ratios are based on the ability of a screen to brighten and dim the backlight unit (BLU) all at once depending on the content on the screen. This "global dimming" operates in brighter scenes by turning the backlight up, and in darker scenes turning it down. Sometimes the backlight will even be turned off completely if the scene is black. This allows manufacturers to promote their extremely high dynamic contrast ratio specification numbers, where they can then compare the difference between the brightest white (at maximum backlight intensity) vs. the darkest black (where the backlight is turned right down, and sometimes even turned off). This technique became very prevalent and we started to see crazy DCR numbers being quoted by screen manufacturers, up in the millions:1. In reality, the regular altering of the backlight intensity can prove distracting in real-life and many people didn't like the feature at all and just turned it off. It didn't really do a great deal to extend the dynamic range or perceived contrast ratio as no matter what the active backlight intensity was, you were still left with the same static contrast ratio at any one point in time, and therefore the differences at that point in time between dark and bright were still the same.
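To make the point about global dimming concrete, here is a minimal sketch of the arithmetic. The panel contrast (1000:1), the white luminance (300 cd/m2) and the 1% dimmed backlight level are assumed round numbers chosen for illustration, not figures from any particular display.

```python
STATIC_CONTRAST = 1000.0  # assumed native panel contrast (1000:1)
FULL_WHITE = 300.0        # assumed white luminance at 100% backlight, in cd/m2

def frame_levels(backlight):
    """Return (white, black) luminance within one frame for a backlight level in 0..1."""
    white = FULL_WHITE * backlight
    black = white / STATIC_CONTRAST  # the panel always leaks 1/1000 of the backlight
    return white, black

bright_white, bright_black = frame_levels(1.0)   # bright scene, backlight at 100%
dim_white, dim_black = frame_levels(0.01)        # dark scene, backlight at 1%

in_frame_bright = bright_white / bright_black    # contrast inside the bright frame
in_frame_dim = dim_white / dim_black             # contrast inside the dark frame
quoted_dcr = bright_white / dim_black            # brightest white vs darkest black, across frames

print(f"Within the bright frame: {in_frame_bright:,.0f}:1")
print(f"Within the dark frame:   {in_frame_dim:,.0f}:1")
print(f"Quoted dynamic contrast: {quoted_dcr:,.0f}:1")
```

The quoted dynamic figure is a hundred times the static one here, yet the contrast available within any single frame has not changed at all.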

In more recent times, to try and overcome some of the ongoing contrast ratio limitations of LCD displays you will often hear the term "local dimming" used by manufacturers. This local dimming can be used to dim the screen in "local" parts, dimming regions of the screen that should be dark, while keeping the other areas bright as they should be. This can help improve the apparent contrast ratio and bring out detail in darker scenes and shadow content.

The optimal way to deliver this local dimming is via a "full-array" backlight system (pictured above), where an array of individual LEDs behind the LCD panel is used to light the display, as opposed to using any kind of edge-lit backlight. In the desktop monitor market, edge-lighting is by far the most common method, but in the TV market full-array backlight methods have become more common. It would be ideal for each LED to be controlled individually, but in reality they are split into separate "zones" and locally dimmed in that way. Most manufacturers will not disclose how many zones are being used, but it is commonly in the dozens. There are some high end reference TVs that use a very high number of zones, 384 in fact. Each zone is responsible for a certain area of the screen, although objects smaller than the zone (e.g. a star in the night sky) will not benefit from the local dimming and so may look somewhat muted. Sometimes you will see "blooming" as well, where one zone is lit, but an adjacent zone is not and a halo/bloom appears as a result. The more zones, and the smaller these zones are, the better.
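The effect of zone size can be sketched with a toy one-dimensional model: a single row of pixels showing a night sky with one bright star. This is a simplification under stated assumptions, not how any real dimming controller works. In particular, we assume each zone's backlight is driven to the brightest pixel it has to show, and that the panel can block all but 1/1000 of that light. Real algorithms often compromise on zone brightness, which is why small highlights can look muted instead.

```python
def simulate_row(target, zone_size, panel_contrast=1000.0):
    """Return the luminance actually shown for one row of pixels.

    target: desired luminance per pixel, as a fraction (0.0..1.0) of peak.
    Each zone's backlight is set to the brightest pixel in that zone (assumed policy).
    """
    shown = []
    for start in range(0, len(target), zone_size):
        zone = target[start:start + zone_size]
        backlight = max(zone)
        floor = backlight / panel_contrast  # darkest level the panel can reach in this zone
        shown.extend(max(level, floor) for level in zone)
    return shown

def glowing_dark_pixels(target, shown):
    """Count pixels that should be black but are lifted by a lit zone."""
    return sum(1 for t, s in zip(target, shown) if t == 0.0 and s > 0.0)

night_sky = [0.0] * 16
night_sky[5] = 1.0  # a single bright star

for zone_size in (16, 4, 1):
    shown = simulate_row(night_sky, zone_size)
    zones = len(night_sky) // zone_size
    print(f"{zones:2d} zone(s): {glowing_dark_pixels(night_sky, shown)} dark pixels glow")
```

With one zone (effectively global dimming) every dark pixel is lifted. With four zones only the star's neighbours form a halo, and with one zone per pixel the halo disappears.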

There are some drawbacks to implementing full-array backlights. Firstly, they are far more expensive to implement than a simple edge-lit backlight, so expect retail prices for displays that use them to be very high. For example the first announced desktop HDR display featuring a full-array backlight system (384 zones) was the 27" Asus ROG Swift PG27UQ which has an expected retail price of around £2,000 at the moment. The full-array backlight can't be blamed on its own for this high price, as this new display is ground-breaking in terms of some of its other features like 4K resolution at 144Hz refresh rate, G-sync support, Quantum Dot etc. However, you can bet that the use of a full-array backlight system with 384 zones is a large part of the production costs, and a reason for the high retail price. Full-array LED backlights also require more depth to the screen than an edge-lit backlight, so when these are first introduced we will see a step back from the ultra-thin profiles that have started to become the norm. There are other HDR capable displays being advertised in the desktop monitor space, but these may operate in other ways and not use this kind of full-array backlight so keep that in mind.

It's worth noting that some LCD TVs also use a more basic and limited "edge-lit local dimming". In this method all the LEDs are situated along the edge of the TV facing the centre of the screen. In some cases this can offer some improvement over a display with no local dimming, although not nearly as much as with full-array dimming, and often it doesn't help at all. It can sometimes even make the image look worse if large areas of the screen are being dimmed at once. This can be influenced by the location of the LEDs, whether they are along all 4 edges or just the top/bottom or left/right sides for instance. This is often the only option where there are power limitations or where a thinner form factor is necessary like in some TVs and certainly in laptops. You will see some displays, certainly in the TV space already, where the screen is edge-lit but HDR is still promoted. That might be pushing it in many cases we feel, so keep in mind how a display is being lit when considering HDR.

Content and HDR10

High Dynamic Range Rendering (HDRR) has been prevalent in games, including from PC graphics cards and from leading games consoles including the new PlayStation 4 Pro and Xbox One S. A wide range of compatible graphics cards exist and while many will support HDRR, keep in mind that this will require additional power and may be a strain on some older cards even if they are technically compatible. There is also a decent range of games available which will support HDRR if you have a compatible display to really make use of the feature. Elsewhere, you would need HDR certified material for HDR movie viewing. There is a new generation of Ultra HD Blu-ray which can support this if required, along with improvements made by the likes of Netflix and Amazon Prime when it comes to streaming content in HDR. Note that not all Ultra HD Blu-rays necessarily support HDR, but the functionality can be included in the disc if the creators want as there is additional capacity available to do so. If you play an HDR disc on a standard dynamic range display, it will just play the content in SDR, since the HDR metadata is just layered on top, and gets ignored on an SDR display. So that part is nice and simple thankfully.

In August of 2015, the Consumer Technology Association (CTA) announced an industry definition for “HDR Compatible Displays” which also included the definition of the HDR10 Media Profile. While other HDR formats exist, HDR10 has been the primary choice for game developers offering HDR extensions of their content for PC and console platforms. Supporting HDR10 under the Windows environment requires application and graphics driver changes but you will often see this format being mentioned by graphics card manufacturers and games developers. So HDR10 is the most widely adopted HDR standard from a content point of view and is an open standard. Most of the major TV manufacturers have adopted this format and the game developers, console manufacturers (including for the Xbox One S and PS4 Pro) and movie producers seem to be doing the same. As a standard, HDR10 currently has a 1000 cd/m2 peak luminance target. It should also offer 10-bit colour depth and cover the colour space defined within the Rec. 2020 spec.

HDR Standards and Certification

To stop the widespread abuse of the term HDR, and a whole host of misleading advertising and specs, the UHD Alliance was set up. This alliance is a consortium of TV manufacturers, technology firms, and film and TV studios. Before this, there were no real defined standards for HDR and no defined specs for display manufacturers to work towards when trying to deliver HDR support to their customers. On January 4, 2016, the UHD Alliance announced their certification requirements for a true HDR display, with a focus at the time on the TV market since HDR had not started to appear in the monitor market. This encapsulates the standards defined for "true" (in their view) HDR support, as well as then defining several other key areas manufacturers can work towards if they want to certify a screen overall under their brand as "Ultra HD Premium". This Ultra HD Premium certification spec primarily focuses on two areas, contrast and colour.

Contrast / Brightness / Black Depth

There are two options manufacturers can opt for to become certified under this standard, accounting for both LCD and OLED displays. This covers the specific HDR aspect of the certification:

Option 1) A maximum luminance ('brightness' spec) of 1000 cd/m2 or more, along with a black level of less than 0.05 cd/m2. This would offer a contrast ratio then of at least 20,000:1. This specification from the Ultra HD alliance is designed for LCD displays and at the moment, is the one we are concerned with here at TFT Central.

Option 2) A maximum luminance of over 540 cd/m2 and a black level of less than 0.0005 cd/m2. This would offer a contrast ratio of at least 1,080,000:1. This specification is relevant then for OLED displays. At the moment, OLED will struggle to produce very high peak brightness, hence this differing spec. While it cannot offer the same high brightness that an LCD display might, its ability to offer much deeper black levels allows for HDR to be practical given the very high available contrast ratio.
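The minimum contrast ratios quoted for the two options follow directly from dividing the peak luminance by the black level. A quick check of that arithmetic is shown below. The function and dictionary names are ours, and both thresholds are treated as exact values for simplicity.

```python
def min_contrast(peak_nits, black_nits):
    """Contrast ratio implied by a peak luminance and a black level, both in cd/m2."""
    return peak_nits / black_nits

options = {
    "Option 1 (LCD)": (1000.0, 0.05),
    "Option 2 (OLED)": (540.0, 0.0005),
}

for name, (peak, black) in options.items():
    print(f"{name}: {min_contrast(peak, black):,.0f}:1")
```

This reproduces the 20,000:1 and 1,080,000:1 figures, and shows how the much lower black level more than compensates for OLED's lower peak brightness.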

In addition to the HDR aspect of the certification, several other key areas were defined if a manufacturer wants to earn themselves the Ultra HD Premium certification:

Resolution - Given the name is "Ultra HD Premium" the display must be able to support a resolution of at least 3840 x 2160. This is often referred to as "4K", although officially this resolution is "Ultra HD", and "4K" is 4096 x 2160.

Colour Depth Processing - The display must be able to receive and process a 10-bit colour signal for improved bit-depth. This offers the capability of handling a signal with over 1 billion colours. In the TV world you will often see TV sets listed with 10-bit colour or perhaps "deep colour". This 10-bit signal processing allows for smoother gradation of shades displayed and since the TV doesn't necessarily need to be able to display all these colours, only process the 10-bit signal, it's not really an issue.

Colour Gamut - As part of this certification, the Ultra HD Alliance stipulates that the display must also offer a wider colour gamut beyond that of typical standard gamut backlights. In the TV space, this would need to be beyond the standard sRGB / Rec. 709 colour space (offering 35% of the colours the human eye can see) which can only cover around 80% of the required gamut for the certification. The display needs to support what is referred to in the TV market as the "DCI-P3" cinema standard (54% of what the human eye can see). This extended colour space allows for a wider range of colours from the spectrum to be displayed and is 25% larger than sRGB (i.e. 125% sRGB coverage). In fact, it is a little beyond Adobe RGB which is ~117% sRGB. As a side note, there is an even wider colour space defined which is called BT.2020 (also written Rec. 2020) and this is considered an even more aggressive target for display manufacturers for the future (~76% of what the human eye can see). To date, no consumer displays can reach anywhere near even 90% of BT.2020, although many HDR content formats use it as a container for HDR content as it is assumed to be future proof. This includes the common HDR10 format. One to look out for in future display developments.

Connectivity - A TV would require an HDMI 2.0 interface. This certification is designed for the TV market at the moment, but in the desktop monitor market DisplayPort is the common option, and certainly needed for the higher refresh rates (>60Hz) supported. We wouldn't be surprised if this Ultra HD Premium certification perhaps got updated at some point to incorporate DisplayPort to allow it to be widely adopted in the monitor market as well.
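The "over 1 billion colours" figure mentioned under Colour Depth Processing above comes from simple arithmetic: each of the three colour channels (red, green, blue) gets 2 to the power of the bit depth levels, and the total is the product across channels. A small sketch follows, with the function name being our own.

```python
def colour_count(bits_per_channel, channels=3):
    """Number of distinct colours a signal can describe at a given bit depth per channel."""
    return 2 ** (bits_per_channel * channels)

for bits in (8, 10):
    levels = 2 ** bits
    print(f"{bits}-bit: {levels} levels per channel, {colour_count(bits):,} colours")
```

Moving from 8-bit to 10-bit gives four times as many steps per channel (1024 instead of 256), which is where the smoother gradation comes from.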

Displays which officially reach these defined standards can then carry the 'Ultra HD Premium' logo which was created specifically for this cause. You need to be mindful that not all displays will feature this logo, but may still advertise themselves as supporting HDR. The HDR spec is only one part of this certification so it is possible for a screen to support HDR in some capacity, but not necessarily offer the other additional specs (e.g. maybe it doesn't have the wider gamut support). Where a screen is advertised with HDR but does not carry the Ultra HD Premium logo, it may be unclear how the HDR is being implemented and whether it can truly live up to the threshold specs that the UHD Alliance came up with. In those situations you may get some of the benefits from HDR content, but not the maximum, full experience intended or defined here. That's where it may get confusing, but rest assured that if a display features this certification and logo it has "fully fledged HDR" as part of its spec, at least in terms of how the UHD Alliance perceive it should be.

So to try and summarise and simplify a little: HDR technically just refers to the extended contrast ratio support offered by using a local dimming technique of some sort, preferably a full-array backlight. So where HDR is advertised, it might only be talking about this ability to offer a higher dynamic range and improved contrast. In the TV space, manufacturers tend to use the term HDR to also encapsulate higher resolution (beyond 1920 x 1080 HD, so normally 3840 x 2160 Ultra HD) and also improved colours with a wider gamut. The resolution and colour space aren't really part of HDR per se, but they get bundled in with the term by TV manufacturers. To try and cover all this in one certification, where defined standards need to be met, particularly when it comes to the HDR part of the spec, the UHD Alliance came up with their Ultra HD Premium logo. We hope that makes sense...just about!

HDR in the Desktop Monitor Market

We've already started to see the term HDR used in desktop monitors now, with its use becoming more prevalent in press releases for forthcoming displays. For instance there is the LG 32UD99 (pictured above), which talks about supporting Ultra HD resolution, 95% of the DCI-P3 colour space (so close to the requirement) and support for the HDR10 standard. However, the spec and press material don't talk about how or if local dimming is used, and we assume there is no full-array backlight being implemented here for a start. The "typical brightness" is listed at 350 cd/m2 with "peak brightness" at only 550 cd/m2 so it doesn't conform to the 1000 cd/m2 minimum brightness standard for the Ultra HD Premium certification or the HDR10 content target. This is odd since LG specifically talk about HDR10 support in their features. So in this instance, it looks like HDR is being offered in some capacity, but not the full HDR experience, and there are some question marks around how it will perform. LG use the following "HDR for PC" logo in their spec information.

Even more confusing is the use of the term HDR for the forthcoming Dell S2718D monitor. Dell's press release for this screen says at the bottom: "Dell’s HDR feature has been designed with a PC user in mind and supports specifications that are different from existing TV standards for HDR. Please review the specs carefully for further details." So at least they seem to be open about saying it might not be the "full HDR" experience people are perhaps expecting or wanting. This screen offers only a 2560 x 1440 resolution, 400 cd/m2 brightness and only a normal 99% sRGB / Rec. 709 colour space. There's no talk about local dimming or anything so it is anyone's guess what they are offering here for so-called HDR support. Certainly none of the specs seem to line up with anything remotely near the longer-standing TV standards for HDR which you would perhaps hope manufacturers would at least aim for.

Then there's the BenQ SW320 (also pictured above), a reference grade screen designed really for professional photography users. They talk about HDR support in their spec for this screen and some aspects of the performance do seem to adhere to the requirements (as defined already in the TV market at least). There's an Ultra HD resolution, 10-bit colour depth support and colour space coverage for 100% DCI-P3. The brightness spec however is only 350 cd/m2 so again there are question marks here about the operation of the HDR support.

So for now, at least with the majority of the announced "HDR" displays in the desktop monitor market, there are a range of specs being offered and no true standards being worked towards by the manufacturers. This is a similar situation to the TV market when HDR first started to emerge there, and one of the reasons why the Ultra HD alliance then set up their certification standards to try and offer some standardisation. At some point in time, something similar needs to be introduced for the desktop monitor market, whether that's an adoption/tweak of the Ultra HD alliance's "Ultra HD Premium" standard, or something else. It looks like the two main graphics card manufacturers in this sector have their own ideas about certification and standards for HDR as detailed below. For now, we would advise caution when hearing the term "HDR" used in the desktop monitor market as it really does seem to be very varied so far. We will try to comment on this as and when we publish news/reviews for any HDR-advertised displays.
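To make the comparison above concrete, the sketch below checks the three announced monitors against two of the Ultra HD Premium thresholds for LCDs: the 3840 x 2160 minimum resolution and the 1000 cd/m2 peak brightness. It uses only the figures quoted in this article. Black level, gamut and bit depth are left out because they were not listed for every model, and the class and function names are hypothetical.

```python
from dataclasses import dataclass

# Thresholds quoted earlier for the LCD route to Ultra HD Premium.
MIN_PEAK_NITS = 1000.0
MIN_WIDTH, MIN_HEIGHT = 3840, 2160

@dataclass
class Monitor:
    name: str
    width: int
    height: int
    peak_nits: float  # highest brightness figure quoted in the spec, in cd/m2

def shortfalls(monitor):
    """Return the checked requirements that this monitor misses."""
    missed = []
    if monitor.width < MIN_WIDTH or monitor.height < MIN_HEIGHT:
        missed.append(f"resolution {monitor.width} x {monitor.height}")
    if monitor.peak_nits < MIN_PEAK_NITS:
        missed.append(f"peak brightness {monitor.peak_nits:.0f} cd/m2")
    return missed

announced = [
    Monitor("LG 32UD99", 3840, 2160, 550),
    Monitor("Dell S2718D", 2560, 1440, 400),
    Monitor("BenQ SW320", 3840, 2160, 350),
]

for monitor in announced:
    missed = shortfalls(monitor)
    summary = "; ".join(missed) if missed else "meets the checked thresholds"
    print(f"{monitor.name}: {summary}")
```

All three fall short on brightness, and the Dell on resolution as well, which is the basis for our advice to treat "HDR" claims on monitors with caution for now.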

NVIDIA's Approach and the Asus ROG Swift PG27UQ

In January 2017 NVIDIA announced that a new generation of G-sync was in development. G-sync is a technology used to offer variable refresh rate support for compatible graphics cards and displays, helping to improve gaming performance and avoid issues like tearing and stuttering where frame rates in games can vary. Their new generation of G-sync focuses on providing support for HDR as well and is referred to as "G-sync HDR". They have produced this technology in partnership with panel manufacturer AU Optronics. Unlike HDR compatible TVs, G-sync HDR monitors have been designed from the ground up, combining the benefits of G-sync with this new support for HDR content and therefore avoiding most of the input lag typically seen from a TV display. Furthermore, and perhaps most importantly for the HDR aspect of the display, it seems that the new G-sync HDR screens will incorporate a full-array 384-zone backlight system for maximum local dimming performance and an optimal HDR experience. At least those talked about so far do.

Along with the support for HDR, it looks like NVIDIA are trying to work towards the defined Ultra HD Premium standard as well. NVIDIA G-sync HDR displays will offer a colour gamut very close to the DCI-P3 reference colour space. They will provide the necessary wider gamut support (~125% sRGB) by making use of the recently developed Quantum Dot technology. Quantum Dot probably deserves an article on its own (coming soon) but to give you a brief overview, a Quantum Dot Enhancement Film (QDEF) can be applied to the screen to create deeper, more saturated colours. First used on high-end televisions, QDEF is coated with nano-sized dots that emit light of a very specific colour depending on the size of the dot, producing bright, saturated and vibrant colours through the whole spectrum, from deep greens and reds, to intense blues. It's a more economical, modern way of offering an extended colour gamut beyond the typical sRGB space without the need for an entirely different (and more expensive) wide gamut LED backlight.

As such, you will see Quantum Dot technology used on a wide range of screens in all sectors, not just in the professional space where wide gamut backlights are sometimes used. Mainstream, multimedia and gaming screens can make use of Quantum Dot if the manufacturers choose and it is also independent of panel technology or backlight type. It can be added to a normal W-LED backlight screen to boost the gamut and colours, or it can also be added to a screen with a full-array backlight like those being developed in the G-sync HDR range discussed here.

As a side note, having Quantum Dot does not necessarily mean the screen can support HDR. You will see many more Quantum Dot displays around already which do not offer HDR support, and certainly not any kind of full-array backlight. Those displays have used Quantum Dot to simply improve the colour gamut and offer more vivid and saturated colours, often desirable for gaming and multimedia. For HDR displays, Quantum Dot is just a method used commonly to bring about the colour gamut improvements necessary to meet the Ultra HD Premium standard as well. So as well as the support for HDR through the full-array backlight system with local dimming, NVIDIA are combining it with Quantum Dot technology to bring about a wider colour space.

The only display announced in any detail so far in this new "G-sync HDR" range is the 27" Asus ROG Swift PG27UQ. This model offers a 3840 x 2160 Ultra HD resolution and so again conforms to the Ultra HD Premium requirements. The spec also lists a 1000 cd/m2 brightness which is necessary for the HDR element of the certification. The colour depth is not listed but it should have no trouble processing a 10-bit input signal anyway. The input options are the only grey area for this certification, as the screen will be designed primarily to use DisplayPort 1.4, since that is needed to drive the 3840 x 2160 resolution at the maximum supported 144Hz refresh rate. So in the desktop monitor space, the HDMI 2.0 requirement for the certification is not really important like it is in the TV market. So for all intents and purposes, this Asus display looks to meet the Ultra HD Premium standards, and so could well carry that certification on release. An Acer Predator range display is also mentioned in NVIDIA's press material for G-sync HDR, although details for that model are currently not known.

It's likely that the NVIDIA G-sync HDR displays are working towards meeting the already existing 'Ultra HD Premium' standards, although knowing NVIDIA, they may well introduce their own "better" standard for a screen to be "G-sync HDR" certified. Their whitepaper talks about how a "true HDR display needs a thoughtfully engineering combination of: higher brightness, greater contrast, wider colour gamut and higher refresh rate". While the first 3 elements are part and parcel of the Ultra HD Premium spec, the latter is NVIDIA's own addition to the list and presumably fits in with their use of G-sync and the drive to develop high refresh rate (>60Hz) displays. The aforementioned Asus ROG Swift PG27UQ features a 144Hz refresh rate for instance. So perhaps rather than adopt the Ultra HD Premium logo, suitable displays will instead carry an "NVIDIA G-sync HDR" logo. Time will tell.

As a side note, from a graphics card point of view, NVIDIA Maxwell and Pascal GPUs support HDR10 output over DisplayPort and HDMI and NVIDIA is continually monitoring and evaluating new formats and standards as they emerge.

AMD's Approach and FreeSync 2

AMD have recently announced the latest development of their FreeSync variable refresh rate technology, which has been around now since 2015. FreeSync 2, as it's being called, is not just about variable refresh rate, but accounts for High Dynamic Range (HDR) as well. It's not designed to be a replacement for FreeSync, more of a side development focusing on what AMD, its monitor partners and game development partners can do to improve high end gaming. It's aimed more at the high end gaming market due to the costs of developing this technology.

Central to this development is HDR support. As Brandon Chester over at Anandtech has discussed more than once, the state of support for next-generation display technologies under Windows is mixed at best. HiDPI doesn’t work quite as well as anyone would like it to, and there isn’t a comprehensive & consistent colour management solution to support monitors that offer HDR and/or colour spaces wider than sRGB. Recent Windows 10 updates have helped a bit, but that doesn't fix all the issues and obviously doesn't account for gamers still on older operating systems. Windows just doesn’t have a good internal HDR display pipeline and so this makes it hard to use HDR with Windows. The other issue is that HDR monitors have the potential to introduce additional input lag because of the internal processing they require.

FreeSync 2 attempts to solve this problem by changing the whole display pipeline, to remove the issues with Windows and offload as much work from the monitor as possible. FreeSync 2 is essentially an AMD-optimized display pipeline for HDR and wide colour gamuts, helping to make HDR easier to use as well as performing better. This will also help reduce the latency and added input lag of the HDR processing. There's a detailed article over at Anandtech which talks more about the development requirements and further technical detail which is well worth a read.

Because all of AMD’s FreeSync 1-capable cards (e.g. GCN 1.1 and later) already support both HDR and variable refresh, every GPU that supports FreeSync 1 will also be able to support FreeSync 2. All it will take is a driver update.

AMD is not setting any firm release dates and is not announcing any monitors at this time. They are only announcing the start of the FreeSync 2 initiative in general terms. They still need to finish writing the necessary driver code and bring on both hardware and software partners so it's likely to be a fair amount of time before it is properly introduced and available.

Conclusion

So to summarise as best we can, HDR as a technology is designed to offer more dynamic images and is underpinned by the requirement to offer improved contrast ratios beyond the limitations of existing panel technologies. This offers significant improvements in performance and represents a decent step change in display technology. There are various means of achieving this HDR support through the backlight dimming techniques available, although some are quite a lot more beneficial than others (i.e. full-array backlights preferred). In the TV market, the HDR feature has been around for a year or so and is becoming more widely adopted with plenty of supporting game and movie content emerging. TV manufacturers also tend to bundle in other spec areas when they talk about "HDR" for their screens. This typically includes higher than HD resolution (usually Ultra HD 3840 x 2160) and a wider colour space (typically around DCI-P3). Because there was so much widespread abuse of the term HDR emerging in the TV market, and so many different specs and standards being used, the UHD Alliance was set up to try and get a handle on things. They came up with their own 'Ultra HD Premium' certification which defines standards for HDR, colour performance, resolution and a few other areas. This seems to be the gold standard in the TV market for identifying a true HDR TV.

The desktop monitor market is far more recent in its adoption of HDR. From a content point of view, it's easy enough to introduce through graphics card and driver support, and we know the content is there already for external devices like Ultra HD Blu-ray players and modern games consoles. From a display point of view, things are less clear and we've already seen a wide range of differing interpretations of HDR, and different specs being offered. Achieving a true HDR experience, as it's been established now in the TV market, seems confusing in the desktop monitor world right now. NVIDIA and AMD both have their own approach to standardising this, with NVIDIA's "G-sync HDR" further along in specification at the moment, and seemingly adhering nicely to the existing Ultra HD Premium certification standards from the TV market. We expect to see slightly different certifications introduced in the monitor world eventually, but we will likely be left in the same situation as the TV market for a while where different specs are being offered amongst the general (mis)use of the HDR term. Be careful when looking at monitors (and indeed TVs) where HDR is being promoted, as not all HDR is the same.